If I deploy a K8s cluster using the azurerm_kubernetes_cluster resource, I get a cluster as expected.

If I then run another plan without making any changes, the plan output shows lots of things which are going to be updated, deleted, and changed.

If that plan is applied, the cluster is deleted and redeployed. If I then run a plan again, it shows the same changes still need making, so it is planning to delete the cluster all over again.

Any thoughts? Should it be doing this?

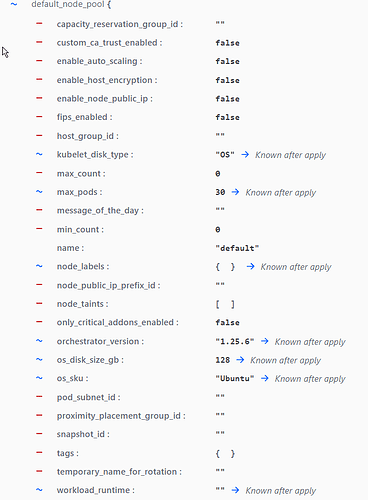

As an example, here is the start of the plan output, showing a lot of values which will change.

There is the same in the node pool,

and so on and so on.

I can’t help thinking I’m doing something really wrong…

Silence… Somewhere in the distance a dog barked.

We found the answer in the end.

We had:

network_plugin_mode = "Overlay"

and it needed to be:

network_plugin_mode = "overlay"

The capitalised ‘O’ in “Overlay” was causing the cluster to be redeployed. This tiny difference was lost in the plan, because the plan flagged hundreds of things which it said needed to be deleted. The ‘O’ was the proverbial needle in the haystack. I don’t know if there is a clear and simple way to catch these things? As an aside, there are plenty of tutorials and snippets out there which show the value with a capital ‘O’. I’m guessing they must have worked when they were written, so somewhere along the way there must have been a change to the provider or to Azure requiring lowercase strings only? Who knows. Anyway, it’s fixed now.
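
For anyone landing here with the same haystack problem, one possible way to narrow it down: the human-readable plan marks the offending attribute with "# forces replacement", and the JSON form of the plan lists the same information per resource. Below is a minimal Python sketch that pulls out only those attributes. It assumes your Terraform version includes `replace_paths` in its JSON plan output; the `replacement_causes` helper and the `sample_plan` data are made up for illustration and are not output from my real cluster.

```python
def replacement_causes(plan):
    """Map each resource the plan will replace to the attribute paths forcing it.

    `plan` is the dict parsed from `terraform show -json <planfile>`.
    """
    causes = {}
    for rc in plan.get("resource_changes", []):
        change = rc.get("change", {})
        actions = change.get("actions", [])
        # A replacement shows up as both "delete" and "create" on one resource.
        if "delete" in actions and "create" in actions:
            # Assumption: replace_paths is present; fall back to an empty list.
            paths = change.get("replace_paths", [])
            causes[rc["address"]] = [
                ".".join(str(step) for step in path) for path in paths
            ]
    return causes


# Hand-written sample shaped like the JSON plan format (illustrative only).
sample_plan = {
    "resource_changes": [
        {
            "address": "azurerm_kubernetes_cluster.example",
            "change": {
                "actions": ["delete", "create"],
                "replace_paths": [["network_profile", 0, "network_plugin_mode"]],
            },
        }
    ]
}

print(replacement_causes(sample_plan))
```

To try it against a real plan, something like `terraform plan -out=tfplan` followed by `terraform show -json tfplan > plan.json` should produce the input, which can then be loaded with `json.load` and passed to the helper. It won't tell you that the fix is a lowercase "o", but it should at least shrink hundreds of lines down to the one attribute worth staring at.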